Naomi Nakamura Hospitalized? The Johnny Sins Viral Hoax

The Narrative

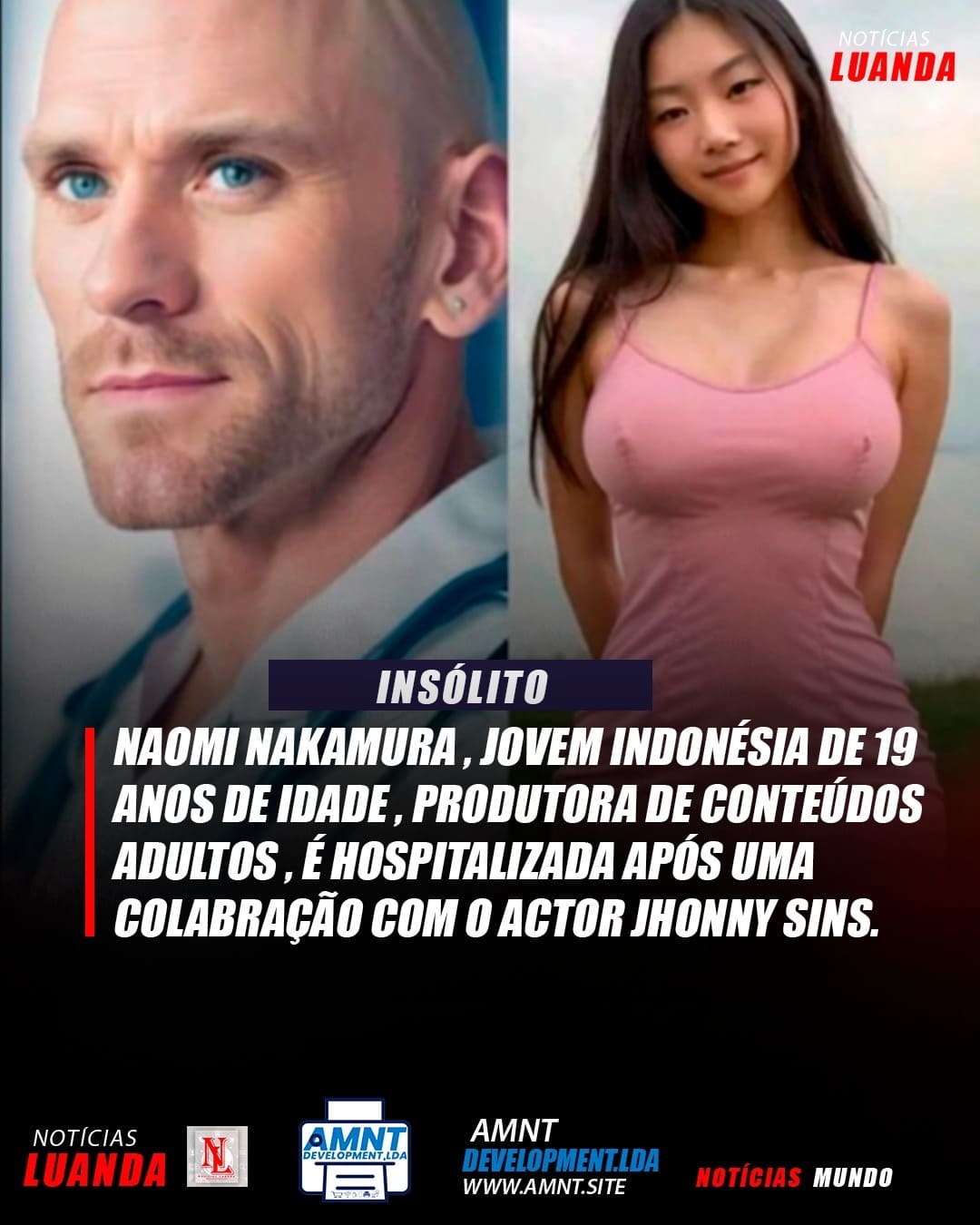

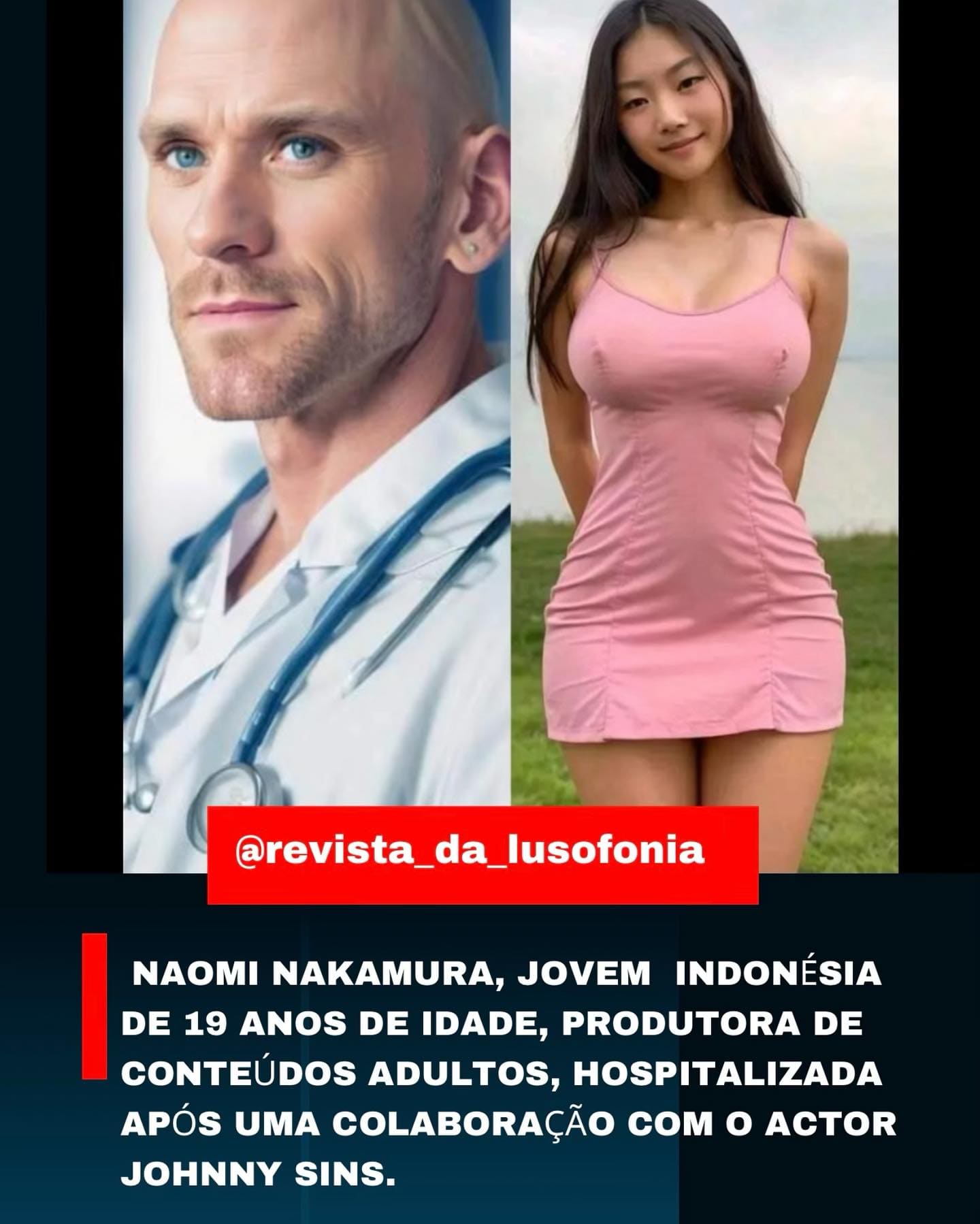

In recent weeks, a sensational story has crossed regional digital borders, appearing on various “news” and “lifestyle” social media pages. The claim states that a 19-year-old Indonesian adult content creator named Naomi Nakamura was hospitalised following a collaboration with industry veteran Johnny Sins.

The posts suggest a mysterious “incident” during filming, leaving the young woman under medical care. However, our investigation at Biasbreak.com confirms that this is not a news report — it is a sophisticated engagement bait campaign using AI-generated imagery.

Image 1 — Glamour shot (origin post)

Image 2

Image 3

Image 4 — Hospital context

Image 5 — Nasal cannula glitch

Image 6 — Iris & earlobe inconsistency

Image 7

Forensic Breakdown

1. The “Ghost” Persona

Extensive searches through industry databases, verified social media platforms, and Indonesian public records yield zero results for a “Naomi Nakamura” in this professional context.

The name is statistically generic, designed to sound authentic to a global audience while remaining impossible to verify. By pairing a non-existent person with a globally recognised figure (Johnny Sins), the hoax gains immediate traction through fame by association.

“Naomi Nakamura” has no TikTok, no X (Twitter), no LinkedIn, and no professional credits anywhere. She exists only within these seven images.

2. Visual Analysis: The AI Smoking Gun

Our pixel-level analysis of all seven images reveals clear hallmarks of Generative Artificial Intelligence:

| Image Category | Flaws Identified |
|---|---|
| Medical Context | Hospital equipment in Images 4, 5, and 6 lacks branding, specific wiring, or realistic medical interfaces. Lighting is “too perfect,” producing a cinematic, waxy sheen on the patient’s skin. Monitors display glowing blobs rather than actual heart-rate data. |
| Nasal Cannula | In Image 5, oxygen tubing appears to blend directly into the subject’s skin rather than resting on it, which is physically impossible. |
| Anatomical Inconsistency | Subtle shifts in facial structure and eye shape occur across images. The earlobe shape and iris pattern change between Image 1 (glamour) and Image 6 (hospital). In reality, these are biological constants. |
| The Hyper-Realist Gloss | Images use a tell-tale AI prompt style (likely Photorealistic + 8K + soft bokeh) producing a subsurface scattering effect: skin looks like translucent wax rather than human epidermis. |
| Environmental Logic | Backgrounds are generic and “dreamlike,” lacking the organised clutter or specific local signage found in real hospitals in Indonesia or anywhere else. |

3. Exploiting Cognitive Bias

The viral success of this post relies on two specific psychological triggers:

4. The Comment Section Feedback Loop

The provided metadata shows hundreds of users reacting with humour or misogyny. This toxic engagement serves as the “fuel” for the hoax. High comment-to-view ratios tell Instagram’s algorithm that this is “valuable content,” forcing it onto the Explore pages of millions who don’t even follow the original accounts.
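The comment-to-view ratio mentioned above is simple arithmetic, and a minimal sketch makes the effect concrete. The function name and every number below are hypothetical and chosen only for illustration; they are not measurements from the hoax posts, and Instagram does not publish how its ranking weighs this signal.

```python
def comment_to_view_ratio(comments: int, views: int) -> float:
    """Return comments per view, or 0.0 when a post has no views."""
    if comments < 0 or views < 0:
        raise ValueError("counts must be non-negative")
    return comments / views if views else 0.0


# Hypothetical figures for illustration only (not taken from the hoax posts).
bait_post = comment_to_view_ratio(comments=900, views=30_000)
ordinary_post = comment_to_view_ratio(comments=60, views=30_000)

print(f"{bait_post:.3f} vs {ordinary_post:.3f}")  # 0.030 vs 0.002
```

With identical reach, the post that provokes jokes and outrage shows a ratio fifteen times higher in this made-up example, which is the kind of gap an engagement-driven feed could reward whether or not anyone believes the story.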

When a story this “big” has no coverage from legitimate news outlets, the source is almost always a fabrication. The complete absence of a second source is itself the verdict.

The Viral Architecture: How They Fooled the Algorithm

This hoax didn’t go viral by accident. It followed a specific blueprint used by “Engagement Farms” to generate revenue from regional ad networks.

1. The Name-Drop Strategy. By attaching Johnny Sins, a man who has become a global meme for “having every job”, the creators guaranteed that comments would flood in, regardless of whether anyone believed the story.

2. The Lusofonia Connection. Posts from noticias_luanda and revista_da_lusofonia target the Portuguese-speaking world (Angola, Mozambique, Brazil). Regional aggregators often have lower verification standards, making them the perfect “Patient Zero” for fake news entering the mainstream.

3. Zero Digital Footprint. In the age of social media, it is impossible for a “rising content creator” to have no traceable presence. This absence is not a mystery; it is the proof.

Media Literacy Takeaway

This case is a textbook example of a Cheapfake turned Deepfake. It uses high-quality AI images to provide “proof” for a story that never happened.

How to stay sharp:

- Reverse Image Search. If the image only appears on “meme” or “aggregator” pages, it is almost certainly fake.

- Verify the Source. Real medical incidents involving high-profile figures are reported by established news organisations, not anonymous Instagram accounts.

- Identify the AI Glow. Look for hyper-smooth skin, logically impossible medical props, and “dreamlike” backgrounds with no real-world signage.

BiasBreak Authenticity Scorecard

Our Verdict

There is no hospital record, no police report, and no person named Naomi Nakamura involved in this industry. This is a Ghost Story generated by AI to harvest likes, follows, and ad revenue from regional news pages.

Deep Dive: The Anatomy of a Digital Fabrication

🔬 Technical Forensics: The AI Fingerprints

Beyond the general look of the images, a pixel-level analysis reveals why all seven images are definitively synthetic:

🕸️ Viral Architecture: The Engagement Farm Blueprint

Regional platforms such as noticias_luanda and revista_da_lusofonia target the Portuguese-speaking world (Angola, Mozambique, Brazil). Their lower verification standards make them ideal “Patient Zero” vectors for fake stories crossing into global feeds.

High comment-to-view ratios — fuelled by jokes, not belief — signal “valuable content” to Instagram’s algorithm, forcing the post onto Explore pages of millions who do not follow the original accounts.

In 2024, it is impossible for a rising content creator to have zero presence. No TikTok. No X. No LinkedIn. No professional credits anywhere. A person with no digital shadow is not a private person — they are a fictional character.

Ask one question: “Is there a second source?”

If the only people talking about a “hospitalisation” are meme pages, and not reputable news organisations or the individual’s own verified accounts, you are looking at a fabrication. Full stop.
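The second-source rule can be written down as a small check. This is a sketch under stated assumptions rather than a BiasBreak tool: the `Source` type, the category labels, and the idea that a human reviewer has already classified each account are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical category labels, invented for this sketch.
INDEPENDENT_KINDS = {"established_news", "verified_account"}


@dataclass(frozen=True)
class Source:
    name: str
    kind: str  # e.g. "meme_page", "aggregator", "established_news", "verified_account"


def has_second_source(sources: list[Source]) -> bool:
    """True if any source is an established outlet or the person's own verified account."""
    return any(s.kind in INDEPENDENT_KINDS for s in sources)


# The two accounts named in this article, classified by hand as aggregators.
coverage = [
    Source("noticias_luanda", "aggregator"),
    Source("revista_da_lusofonia", "aggregator"),
]

print(has_second_source(coverage))  # False
```

A `False` result does not prove a story is fake on its own, but under the rule above it means the claim has not yet earned belief.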
